Start Small, Learn Fast: A Human-Centered Data Pilot

Where Human Insight Meets Data Systems

Many nonprofit programs rely on data that is collected, verified, and prepared by humans. Though this data is critical to program operations, impact measurement, and reporting, it is often managed by manual or loosely connected processes that make validation, reconciliation, and analysis difficult at scale.

This case study explores how lightweight systems can be introduced to support these workflows, not by replacing human input but by making it more consistent, trustworthy, and usable. It focuses on practical ways to pair human-collected data with automation that assists with processing and validation, while keeping review and judgment in human hands.

Using the WATCH court monitoring program at The Advocates for Human Rights as an example, the case study highlights approaches and lessons that are broadly applicable to programs where humans are central to data collection.

A Case Study: Court Monitoring Data Collection

The WATCH program, led by The Advocates for Human Rights, uses court monitoring to advocate for the human rights of survivors of gender-based violence, including domestic abuse, sexual assault, and sex trafficking.

As part of its court monitoring work, The Advocates relies on a long-standing process in which volunteers observe court hearings and record observations about the operations, safety, and accessibility of court proceedings, trial outcomes, and more. Over time, this process has generated a large volume of data that is valuable for identifying trends, informing advocacy, and supporting accountability efforts.

The existing WATCH workflow has been refined over time to:

Collect information and observations based on volunteers monitoring live court proceedings

Apply manual updates to court observation data based on separate reviews by staff and volunteers

Manual reviews of court monitoring records are primarily conducted by comparing court observation data against existing court records systems, using a web portal to look up and verify hearings and cases one by one.

While the data collected through WATCH is incredibly valuable, a few factors make it challenging to validate and analyze at scale. These include:

Data sourcing - Volunteers collect valuable information that can only be observed in court, but are also tasked with entering information that could be pulled more accurately and efficiently from court records. This means The Advocates’ staff spend time correcting monitoring data by comparing it to court records, while court monitors spend time recording administrative details instead of capturing in-depth observations about the hearings they attend.

Data entry consistency - Differences in formatting and the use of free-text or “Other” responses mean data must be cleaned and standardized to support analysis.

Level of data collection - WATCH analyzes data at both the hearing and case levels, but real-world constraints mean that case information is captured at the hearing level, creating complexity in reconciling and tying data together across hearings, cases, and defendants in order to perform analysis.

The WATCH program is expanding and gaining visibility. To amplify its impact, the program needs robust, trusted data to support its work. The Advocates recognized the need to explore what a more effective, scalable hybrid data collection process could look like in the future.

The Approach? Start Small.

When incorporating automation into data collection processes, consider starting small rather than jumping straight to a full system overhaul.

The Advocates opted to start with a focused pilot built into existing workflows. It was designed to improve the quality of data outputs while also clarifying the limitations of the current process, testing ways to address known issues, and generating lessons to inform a broader redevelopment of its data collection processes.

The pilot focused on a subset of data points collected during court observation where inconsistencies were most likely to affect analysis, and where accurate data was available using existing court records systems.

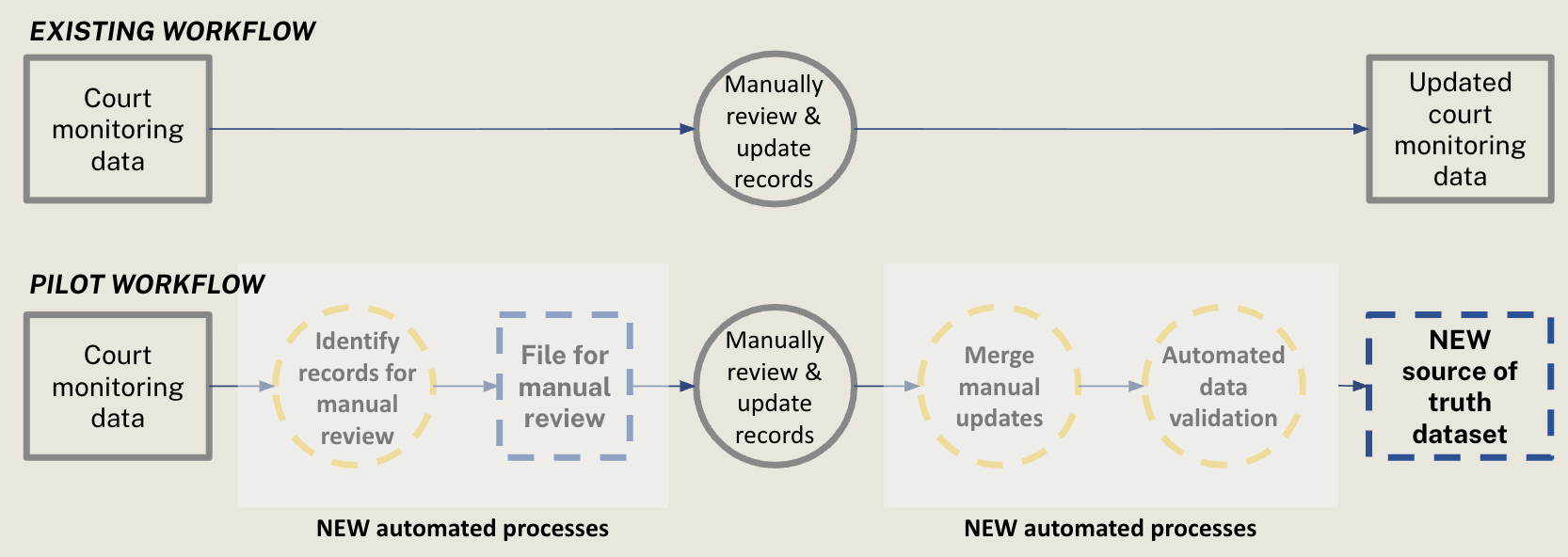

Rather than replacing the current system, the pilot introduced a supplemental review layer that could ingest court observation data, flag and export records requiring manual review, merge updates with source data, apply automated validation rules, and maintain a clear record of all data updates made. The result is a single source-of-truth dataset that contains trusted data for impactful analysis.

Lightweight Tools, Thoughtful Design

To deliver the pilot, two low-cost, lightweight tools were integrated into the existing WATCH data system:

Hex.tech was used to create a simple, repeatable data pipeline that supports both automation and human review:

Automatically pulling in up-to-date court monitoring data from Google Sheets.

Identifying records that require manual review and creating a file that staff can easily review and update.

Ingesting staff updates and applying automated data validation, performing calculations, and standardizing data.

Combining all updates into a single source of truth used for ongoing reporting and analysis, while maintaining a record of all data changes.
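The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pilot's actual Hex project: the field names (`hearing_id`, `case_number`, `hearing_type`), the flagging rule (a blank or "Other" value), the sample values, and the helper names are all hypothetical, and in-memory lists stand in for the Google Sheets data.

```python
from dataclasses import dataclass

# Hypothetical fields that staff verify against court records.
REVIEW_FIELDS = ("case_number", "hearing_type")


@dataclass(frozen=True)
class Change:
    """One audit-trail entry: which field changed, and from what to what."""
    hearing_id: str
    field: str
    old: str
    new: str


def needs_review(record: dict) -> bool:
    """Flag a record when a reviewed field is blank or a catch-all 'Other'.

    This rule is an assumption made for the example.
    """
    return any(
        (record.get(f) or "").strip().lower() in ("", "other")
        for f in REVIEW_FIELDS
    )


def merge_updates(records: list[dict], updates: dict[str, dict]):
    """Apply staff updates keyed by hearing_id; return (merged, audit_log)."""
    merged, audit_log = [], []
    for record in records:
        current = dict(record)  # copy so the source data is never mutated
        for field, new in updates.get(record["hearing_id"], {}).items():
            old = current.get(field, "")
            if new != old:
                audit_log.append(Change(record["hearing_id"], field, old, new))
                current[field] = new
        merged.append(current)
    return merged, audit_log


# Made-up sample data standing in for form responses.
observations = [
    {"hearing_id": "H1", "case_number": "CASE-001", "hearing_type": "Sentencing"},
    {"hearing_id": "H2", "case_number": "", "hearing_type": "Other"},
]

# Step 2: build the file of records staff need to look at.
review_queue = [r for r in observations if needs_review(r)]

# Steps 3 and 4: ingest staff updates and combine them with the source data.
staff_updates = {"H2": {"case_number": "CASE-002", "hearing_type": "Arraignment"}}
trusted, audit_log = merge_updates(observations, staff_updates)
```

Copying each record before applying updates keeps the original observations intact, so the audit log and the source data together show exactly what changed and why the trusted dataset differs.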

Google Apps Script was used to directly manage and protect data within Google Sheets:

Making sure key data used in reports stays intact when data is refreshed, so reports don’t break.

Locking form responses which should not be edited during the review process.

Applying clear formatting and visual cues to guide staff and volunteers when entering or reviewing updates.

Accompanying documentation and training helped to ensure the staff were up to speed on new processes, and that reviews happened consistently.

The result is a clear, step-by-step workflow that incorporates data entry, manual reviews, and automated updates to arrive at more accurate, trusted data to inform WATCH’s work.

The diagram maps the existing and pilot workflows side by side, highlighting where automation was intentionally introduced to reduce friction while preserving human judgment.

Small Changes Drove Meaningful Results

While the pilot was not designed to replace the existing WATCH data system, its results demonstrated the value of a more intentional, end-to-end approach to data collection and validation.

The pilot successfully established a practical approach to supporting human-entered data with automation. By adding a lightweight review layer and clear validation checks, the process produced a trusted, analysis-ready dataset while preserving the human judgment essential to court monitoring work.

The pilot also increased confidence in the data used for reporting and analysis. Verifying a targeted subset of high-impact fields and maintaining a clear audit trail of updates improved trust in the resulting data and made analysis easier.

Just as importantly, the pilot reduced ambiguity around future system design. Rather than relying on assumptions, the organization now has actionable insights about which elements of the workflow benefit most from automation, where complexity arises, and how initial data collection choices affect downstream effort. This clarity positions WATCH to make more informed, strategic investments as the program continues to grow.

What We Learned

This pilot was intentionally designed as a learning exercise. Its primary goal was to better understand how automated data pipelines, manual review workflows, and validation logic could work together to produce trusted, analysis-ready data. Results will be used to inform a second project phase focused on longer-term redevelopment of data collection and validation processes.

By testing a technical system from data collection through review and final output, we observed where automation was effective, where issues arose, and how underlying process design choices influence complexity.

These observations surfaced broader insights about data collection, standardization, and workflow design. While grounded in WATCH’s context, the pilot reflects challenges common to many programs that combine observational data and administrative records, or automation and human review.

1) Lightweight systems can deliver outsized value

Meaningful improvements in data quality and usability do not require large-scale system replacements. By introducing lightweight, targeted technical components focused specifically on validation, reconciliation, and review, the project was able to improve reliability and clarity while working within existing tools and workflows.

2) Effective review workflows balance automation and judgment

While many validation steps can be automated, some scenarios require human interpretation. Designing workflows that intentionally combine automation with targeted manual review and a clear escalation path helps maintain data quality while keeping workflows as streamlined as possible.

3) Early investment in intentional data collection pays off

The pilot reinforced that clearly defining what data is collected, why it matters, and where it should come from has an outsized impact on data quality and clarity. Focusing on high-value information, separating observational data from administrative records, and applying entry guardrails like standardized response options and formatting constraints can significantly improve data efficiency and utility.

Any secondary or manual review process also needs to incorporate the same guardrails as the original process. Without this alignment, inconsistencies are reintroduced during updates.
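One way to keep guardrails aligned is to define them once and run the same check on both original entries and later corrections. The sketch below is illustrative only: the allowed hearing types, the case-number pattern, and the field names are hypothetical placeholders, not WATCH's actual form options or court numbering scheme.

```python
import re

# Hypothetical allowed values; a real list would come from the program's form.
ALLOWED_HEARING_TYPES = {"Arraignment", "Pretrial", "Sentencing", "Other"}
# Hypothetical case-number format, used only for illustration.
CASE_NUMBER_PATTERN = re.compile(r"^CASE-\d{3}$")


def normalize(value: str) -> str:
    """Trim and collapse whitespace so equivalent entries compare equal."""
    return " ".join(value.split())


def validate(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    hearing_type = normalize(record.get("hearing_type", "")).title()
    if hearing_type not in ALLOWED_HEARING_TYPES:
        problems.append("hearing_type is not an allowed option")
    if not CASE_NUMBER_PATTERN.match(normalize(record.get("case_number", ""))):
        problems.append("case_number does not match the expected format")
    return problems


# The same function checks an original form entry and a later staff correction.
form_entry = {"hearing_type": "  sentencing ", "case_number": "CASE-001"}
staff_correction = {"hearing_type": "Sentncing", "case_number": "case 1"}

form_problems = validate(form_entry)
correction_problems = validate(staff_correction)
```

Here the form entry passes after normalization, while the correction is rejected for a misspelled hearing type and a malformed case number. Because both paths share one validator, a correction cannot quietly introduce a value the original form would have refused.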

The pilot also highlighted the importance of creating flexibility in the level at which data can be analyzed whenever possible. For the WATCH program, data is collected at the hearing level due to real-world constraints, while many key questions are asked at the case level. When data is collected at one unit but analyzed at another, system design needs to consider how that data is transformed and organized for analysis.

Additionally, in cases where information about the same entity is captured across multiple records (for example, details about a defendant recorded across multiple hearings), designing automated systems that can apply consistent rules to combine and resolve overlapping data reduces manual effort and supports more accurate, trustworthy analysis.
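As a rough sketch of what such a rule could look like, the example below rolls hearing-level rows up to one row per case. The rule itself (the most recent non-blank value wins, and any disagreement between hearings is flagged for a person to resolve) is an assumption chosen for illustration, as are the field names (`case_id`, `hearing_date`, `charge_level`) and the sample rows.

```python
from collections import defaultdict


def roll_up(hearings: list[dict], field: str) -> dict[str, dict]:
    """Collapse hearing-level rows into one row per case for a single field."""
    by_case = defaultdict(list)
    for hearing in hearings:
        by_case[hearing["case_id"]].append(hearing)

    cases = {}
    for case_id, rows in by_case.items():
        rows.sort(key=lambda h: h["hearing_date"])  # ISO dates sort as text
        values = [h[field] for h in rows if h[field]]  # ignore blank entries
        cases[case_id] = {
            "hearing_count": len(rows),
            field: values[-1] if values else "",  # most recent non-blank value
            "conflict": len(set(values)) > 1,  # hearings disagree: needs review
        }
    return cases


# Made-up hearing-level rows; each case appears across two hearings.
hearings = [
    {"case_id": "CASE-001", "hearing_date": "2024-03-05", "charge_level": "Felony"},
    {"case_id": "CASE-001", "hearing_date": "2024-01-10", "charge_level": ""},
    {"case_id": "CASE-002", "hearing_date": "2024-02-01", "charge_level": "Misdemeanor"},
    {"case_id": "CASE-002", "hearing_date": "2024-02-20", "charge_level": "Felony"},
]

cases = roll_up(hearings, "charge_level")
```

In this example the first case resolves automatically because only one hearing recorded a value, while the second is marked as a conflict so that a person, not the rule, decides which value is correct.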

Pilot Insights Guide the Path Forward

Building on the pilot learnings, the next phase of the WATCH project will focus on redesigning the end-to-end data collection and review process to improve clarity, consistency, and long-term scalability. The result will be a more intentional system that captures the right information, applies appropriate guardrails, and supports reliable analysis while minimizing the effort required by staff and volunteers.

This pilot demonstrated how small, well-scoped technical experiments can create clarity in complex data environments. By testing new tools and workflows within existing constraints, the project generated actionable insights about data structure, standardization, and review processes without requiring a full system overhaul. Most importantly, it laid a strong foundation for future improvements by helping clarify what to build, why it matters, and how to approach change in a sustainable way.